The Ultimate Guide to Mobile A/B Testing

Will Lam

7/16/2023

How To Get Started

Mobile app A/B testing (also known as split testing) is the practice of presenting a sample of users with two versions of a screen or in-app experience. There is usually a current “A” baseline version and a “B” variation with some visible change. Product teams track user interactions with each version to determine whether one influences user behaviour or engagement more positively than the other.

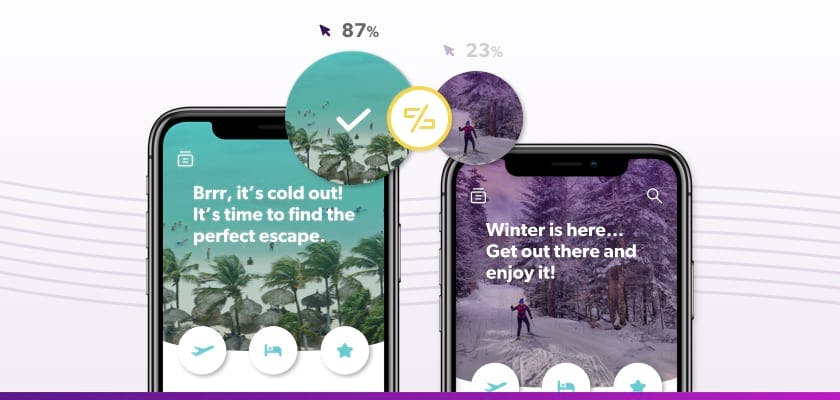

An example of an A/B test of copy and images in a travel app.
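To make the split concrete, here is a minimal sketch of how a user might be assigned to the “A” or “B” version. It assumes a stable user ID and hashes it together with an experiment name, so the same user always sees the same version. The function name `assign_variant` and the experiment name are hypothetical, and real testing platforms may assign users differently.

```python
import hashlib


def assign_variant(user_id: str, experiment: str, variants=("A", "B")) -> str:
    """Deterministically map a user to a variant for one experiment.

    Hypothetical helper for illustration only: hashing the experiment
    name with the user ID keeps a user's assignment stable across
    sessions and independent between experiments.
    """
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).digest()
    # Interpret the first 8 bytes of the hash as an integer bucket.
    bucket = int.from_bytes(digest[:8], "big")
    return variants[bucket % len(variants)]


# Example usage with made-up identifiers.
variant = assign_variant("user-123", "travel_home_copy")
print(f"user-123 sees version {variant}")
```

Because the assignment depends only on the user ID and experiment name, no assignment table needs to be stored on the device for the user to get a consistent experience.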

While A/B testing has been around since the dawn of the web, running mobile A/B tests has historically been more difficult. Deploying a test used to require engineering resources and app store updates. Only companies with the resources to build in-house experimentation tools (like Google and Facebook) could quickly run tests and learn what was engaging users before the competition.

Today, there are many A/B testing platforms that help brands quickly see what is driving user engagement, retention, and conversions. While running mobile A/B tests may be easier than ever before, it can be hard to know where to start and how to incorporate app A/B testing into your product development process.

We’ve put together this guide to help mobile developers optimize their products as quickly as possible through mobile A/B testing.

Choosing Software Solutions

Before you can start experimenting, you’ll need a way to administer mobile A/B tests. There are two ways to do this:

- Build your own internal tool

- Buy a solution from a trusted provider

Today, there are many A/B testing platforms on the market, which makes building internal tools less cost-effective and less necessary than it was a few years ago. Many require only a simple SDK install to get started, with SDKs available for most popular platforms and programming languages. The path you choose depends on the goals you set for your program, the types of tests you’d like to run, the engineering resources you have, and how quickly you’d like to get started.

There are also supplementary tools that can help you better understand your mobile users’ journeys. Here are some of our recommendations for building the best mobile testing tech stack:

- DevCycle – A feature management platform with an in-depth experimentation and A/B testing suite providing product and engineering teams with the analytics necessary for optimization.

- mParticle – A single platform for collecting and storing user data

- Amplitude – In-app analytics and event tracking

- Google BigQuery – Analytics data warehouse

- Instabug – In-app feedback collection and bug reporting

- Looker – BI data visualization and analysis

Question: Do I need a statistics background to run A/B tests? 🤓

Answer: You may wonder if you need to be an expert in mathematical concepts like p-values, Bayesian vs. frequentist testing, or z-scores and t-scores before you start A/B testing. While valid test results matter, don’t let the lack of a statistics degree slow you down. Mobile A/B testing vendors should have things like statistical significance (or chance of beating baseline) baked into their products.
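For a sense of what a significance readout is computing, here is a minimal sketch of one common frequentist approach, a two-sided two-proportion z-test on conversion counts. It is an illustration under simple assumptions (independent users, one conversion per user at most), not a description of how any particular vendor calculates significance. The function name and the example numbers are made up.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, two-sided p-value) comparing conversion rates of A and B.

    conv_a / conv_b are the numbers of converting users, n_a / n_b the
    numbers of users exposed to each version.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that A and B convert equally.
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("Conversion rate is 0% or 100% in both groups.")
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Example usage with illustrative numbers.
z, p = two_proportion_z_test(conv_a=100, n_a=1000, conv_b=150, n_b=1000)
print(f"z = {z:.2f}, p-value = {p:.4f}")
```

A small p-value (0.05 is a commonly used threshold) suggests the difference between versions is unlikely to be due to chance alone.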

If you want to learn more, here are some articles and tools to help you get started:

A/B Testing Statistics Explainers:

- A/B Testing Statistics Made Simple

- The Complete Guide To Statistical Significance

- A/B Testing Statistics: An Intuitive Guide For Non-Mathematicians

Statistical Significance & Sample Size Calculators:

- ABBA Calculator - Confidence calculator for binomial experiments.

- A/B Test Guide - Robust, flexible statistical significance calculator.

- Evan’s Awesome A/B Tools - A number of calculators for various types of A/B tests.

- Sample Size Calculator - A sample size calculator and other related resources.

Hit the Ground Running With This Framework

Executing mobile A/B tests often follows this process:

- Ideation: Test ideas are brainstormed with cross-functional teams and inputs. Then, they are prioritized. Some are assigned for production, while others enter a backlog for later.

- Creation: The elements needed for the test are pulled together, such as copy, images, graphics, or code-based variations. Sample size and test length are calculated. Goals are solidified, and results tracking is set up (if it hasn’t been already, or if a new event type is being tracked).

- Launch & Monitoring: The test goes live to users. Early results may be monitored to watch for overwhelmingly negative impacts on the user experience or other errors.

- Reporting: When the test ends, results are reviewed for insights. These results are shared and communicated with appropriate parties.

- Iteration: Learnings are used to either refine or re-run the test, create new test ideas, or push product changes live. A/B test results can also be used to iteratively influence the product roadmap.

Resources to help you at each stage of the mobile A/B testing process:

- Ideation: 5 Ways to Generate Impactful Experiment Ideas

- Creation: Mobile A/B Testing Ideas That Drive ROI at Every Stage of the User Funnel

- Launch & Monitoring: Best Practices for Launching Your First Mobile A/B Tests

- Reporting: A/B Testing Data Pitfalls to Avoid

- Iteration: How to Use A/B Testing for Better Product Design

Build an Internal Culture Around Optimization

While it’s trendy to pay lip service to principles like “test don’t guess” or “move fast and break things,” it can be hard to get your organization rapidly experimenting and optimizing your app—especially when it runs against the way things have been done for years.

Here are five steps you can take to create an experimentation culture:

- Get buy-in - Pull together case studies, stats, and infographics that show the benefits of A/B testing, and share how the process works so teams are educated and bought into the change.

- Create a consistent testing plan and process - Propose a process and build an experimentation roadmap to track test progress, collect ideas, and schedule your test backlog. Set a regular cadence for testing so it becomes a consistent part of your team’s patterns and thinking. Share your roadmap with others in your organization for added visibility and collaboration.

- Start small and set realistic expectations - Don’t aim to impact your most important key metrics first. Instead, come up with small, focused tests (like moving a screen or adding in a “skip” button) that may impact critical metrics (like sign-ups or purchases) over time. Establish your time frames and success measures before launching a test.

- Regularly analyze test results and communicate with others - Schedule time to look at test outcomes and establish methods or channels for sharing insights with the key teams so they can implement changes accordingly.

- Schedule quarterly meet-ups for ideation & reviewing data - Have a quarterly meeting to sync on testing priorities and review high-level experimentation insights with key stakeholders from across your organization. This will help you make an effective testing plan, maintain internal buy-in for A/B testing, and prioritize your experiment roadmap.

A Note On “Inconclusive” or “Negative” A/B Testing Results 🧐

Not all mobile A/B tests have clear winners or drive noticeable spikes in revenue or adoption. Continuously testing small changes is the best way to optimize your app without negatively impacting important metrics along the way. Sometimes, testing is about learning what not to launch, so share learnings from the “failures” as well as the winners.

How To Engage & Retain Users

Engagement and retention are the ultimate goals for mobile apps. Using the “AARRR” (AKA Pirate Metrics) mobile app funnel model to come up with test ideas can help you build a stickier product at every stage of the user journey.

Here are some A/B test suggestions for each stage:

- Acquisition - When users first enter your app, it’s critical you run A/B tests that drive users to take essential actions, like completing the sign-up process and opting into location services and push notifications. Without these, pulling inactive users back into your app later will be tough.

- Activation - Getting users to explore your app, use key features and have “a-ha” moments must happen early on, so users see value and build a habit around using your app. Test how CTAs, menus, and educational messages are impacting adoption of features that correlate with long-term engagement rates (like adding friends, making a wish list, sharing content, etc.).

- Retention - Here, you’ll want to test how notification timing and relevant content or product recommendations drive users back into your app. Consider using days since last login, abandoned cart data, and other in-app activity (or engagement with marketing campaigns—where possible through integrations) to test what brings people back to your app.

- Referral - To create a virtuous cycle of acquisition through your users, A/B test how and (perhaps more importantly) when you ask users to write reviews, use referral codes, or share a link to your app on social media to drive new sign-ups.

- Revenue - To increase the odds of multiple sales, upgrades, or increases in average order values, you’ll want to A/B test check-out flows, pricing pages, and special offer messages. You can also optimize for added spend by testing how social proof from other users or your recommendation engine influences purchases.

App Case Studies, Ideas & Examples

When first building out your testing process, it’s helpful to learn from what’s worked for others, no matter their industry or app type.

Here are some A/B testing case studies and examples from cutting-edge companies to inspire you:

- A/B Testing Examples from Duolingo’s Master Growth Hacker: Gina Gotthilf helped grow Duolingo from 3 million to 200 million users. In this blog, she shares four of her all-time favorite mobile A/B tests that helped get them there.

- How Pinterest Uses A/B Testing (Not SEO) to Drive User Acquisition & Retention: Pinterest’s premier growth hacker, Casey Winters, shares his advice on evolving a process for growth experimentation using examples from Pinterest’s top A/B tests.

- How to Experiment like Facebook and Netflix By Adopting the “10,000 Experiment Rule”: See how Netflix and Facebook run thousands of experiments to improve their apps (including a look at Facebook’s Airlock framework), plus examples from each.

- Good UI Leaks: This frequently updated blog is a repository of recent tests brands like Airbnb, Booking.com and more are running.

- Five High-Impact, Visual Experiments You Can Launch in a Day: Five examples of code-free tests you can run to optimize your app fast.

More Resources & People to Follow

Since experimentation is an ever-evolving process, here are some top folks in the space to follow so you can keep your mobile app development skills up-to-date:

- Andrewchen.co by Andrew Chen (@andrewchen)

- Ben-evans.com by Benedict Evans (@BenedictEvans)

- Black Box of Product Management by Brandon Chu (@BrandonMChu)

- Reforge by Brian Balfour (@bbalfour)

- Casey Accidental by Casey Winters (@onecaseman)

- Lukew.com by Luke Wroblewski (@lukew)

- Noteworthy by Sam DeBrule (@SamDeBrule)

- Product Manager HQ by Kevin Lee (@kevinleeme)

- Product Habits by Hiten Shah (@hnshah)

- The Looking Glass by Julie Zhuo (@joulee)
